Serverless GPUs Public Preview: Run AI workloads on H100, A100, L40S, and more

Welcome to day two of Koyeb launch week. Today we're announcing not one, but two major pieces of news:

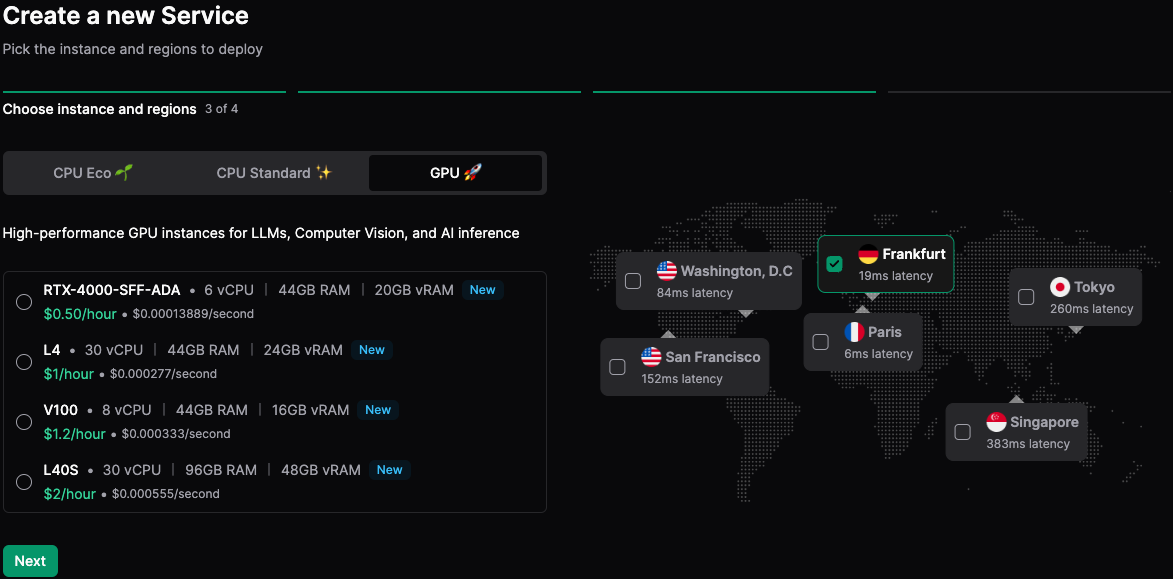

- GPUs are now available in public preview: Everyone can now deploy and run GPU-accelerated workloads on Koyeb.

- H100 and A100 access: With 80GB of vRAM, these cards are ideal for generative AI processes including large language models, recommendation models, and video and image generation.

Our lineup ranges from 20GB to 80GB of vRAM, with the A100 and H100 at the top end. Both cards support FP64 instructions for high-precision calculations and deliver a gigantic 2TB/s of memory bandwidth.

| | RTX 4000 SFF ADA | L4 | L40S | A100 | H100 |
|---|---|---|---|---|---|
| GPU vRAM | 20GB | 24GB | 48GB | 80GB | 80GB |
| GPU Memory Bandwidth | 280GB/s | 300GB/s | 864GB/s | 2TB/s | 2TB/s |
| FP64 | - | - | - | 9.7 TFLOPS | 26 TFLOPS |
| FP32 | 19.2 TFLOPS | 30.3 TFLOPS | 91.6 TFLOPS | 19.5 TFLOPS | 51 TFLOPS |
| FP8 | - | 240 TFLOPS | 733 TFLOPS | - | 1513 TFLOPS |
| RAM | 44GB | 44GB | 92GB | 180GB | 180GB |
| Dedicated vCPUs | 6 | 15 | 30 | 15 | 15 |
| On-demand price | $0.50/hr | $1/hr | $2/hr | $2.70/hr | $3.30/hr |
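If you are unsure which card fits your model, a rough rule of thumb is that the weights alone need about (number of parameters) × (bytes per parameter) of vRAM. The sketch below applies that rule to the vRAM and price figures from the table above. The `headroom` factor of 1.2 and the function names are our own assumptions for illustration; real usage also depends on KV cache, activations, and batch size, so treat the result as a starting point rather than a guarantee.

```python
# vRAM (GB) and on-demand price ($/hr), copied from the table above.
GPUS = {
    "RTX 4000 SFF ADA": (20, 0.50),
    "L4": (24, 1.00),
    "L40S": (48, 2.00),
    "A100": (80, 2.70),
    "H100": (80, 3.30),
}


def weights_gb(params_billions, bytes_per_param):
    """Approximate memory for the model weights only, in GB (1 GB = 1e9 bytes)."""
    return params_billions * bytes_per_param


def cheapest_fit(params_billions, bytes_per_param, headroom=1.2):
    """Return the cheapest single card whose vRAM covers weights * headroom.

    `headroom` is an assumed safety margin, not a measured value.
    Returns None when no single card in the table is large enough.
    """
    needed = weights_gb(params_billions, bytes_per_param) * headroom
    fits = [(price, name) for name, (vram, price) in GPUS.items() if vram >= needed]
    return min(fits)[1] if fits else None


print(cheapest_fit(7, 2))     # 7B in FP16  -> RTX 4000 SFF ADA
print(cheapest_fit(13, 2))    # 13B in FP16 -> L40S
print(cheapest_fit(70, 0.5))  # 70B at 4 bits per weight -> L40S
print(cheapest_fit(70, 2))    # 70B in FP16 -> None (needs more than one card)
```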

With prices ranging from $0.50/hr to $3.30/hr and always billed by the second, you'll be able to run training, fine-tuning, and inference workloads with a card adapted to your needs.
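To make per-second billing concrete, here is a minimal sketch that turns the hourly prices above into the cost of a short run. The function name and the rounding to four decimals are our own choices for illustration; your actual invoice may round differently.

```python
# On-demand prices in $/hr, taken from the pricing row of the table.
HOURLY_PRICE_USD = {
    "RTX 4000 SFF ADA": 0.50,
    "L4": 1.00,
    "L40S": 2.00,
    "A100": 2.70,
    "H100": 3.30,
}


def cost_usd(gpu, seconds, instances=1):
    """Cost of running `instances` cards for `seconds`, billed by the second."""
    per_second = HOURLY_PRICE_USD[gpu] / 3600
    return round(per_second * seconds * instances, 4)


# A 10-minute fine-tuning test on one H100: 3.30 / 3600 * 600
print(cost_usd("H100", 600))               # 0.55
# A 90-second inference burst on one L4: 1.00 / 3600 * 90
print(cost_usd("L4", 90))                  # 0.025
# Two A100s for a full hour
print(cost_usd("A100", 3600, instances=2)) # 5.4
```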

Just like other Instances, GPUs can autoscale based on different criteria including requests per second, concurrent connections, P95 response time, and CPU & memory usage. This provides a fast, flexible, and cost-effective way to optimize your GPU usage and handle traffic spikes.
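For intuition about how target-based autoscaling behaves, here is a generic sketch of the proportional rule used by many autoscalers (the Kubernetes Horizontal Pod Autoscaler documents the same formula). It is not a description of Koyeb's internal algorithm; the function name, parameters, and default limits are assumptions made for the example.

```python
import math


def desired_instances(current, observed, target, min_instances=1, max_instances=10):
    """Proportional scaling: grow or shrink so the per-instance metric nears `target`.

    current  -- number of Instances running now
    observed -- average metric per Instance (e.g. requests per second)
    target   -- the per-Instance value you want to stay at
    """
    if target <= 0:
        raise ValueError("target must be positive")
    raw = math.ceil(current * observed / target)
    # Clamp to the configured bounds.
    return max(min_instances, min(max_instances, raw))


# 2 Instances at 150 req/s each, target 100 req/s per Instance -> scale out to 3
print(desired_instances(2, 150, 100))  # 3
# 4 Instances at 20 req/s each, target 100 -> scale in to the minimum of 1
print(desired_instances(4, 20, 100))   # 1
# A large spike is capped by max_instances
print(desired_instances(5, 500, 100))  # 10
```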

To get started and deploy on GPU-based Instances, go to the Koyeb control panel and hit the Create Service button.

If you need several GPUs, don't be shy! Schedule an onboarding session and we will grant $200 of credit to your account for a test run.


Get started with GPUs

To get started and deploy your first service backed by a GPU, you can use the Koyeb CLI or the Koyeb Dashboard.

As usual, you can deploy using pre-built containers or directly connect your GitHub repository and let Koyeb handle the build of your applications.

Here is how you can deploy an Ollama service in one CLI command:

    koyeb app init ollama \
      --docker ollama/ollama \
      --instance-type l4 \
      --regions fra \
      --port 11434:http \
      --route /:11434 \
      --docker-command serve

That's it! In less than 60 seconds, you will have Ollama running on Koyeb using an L4 GPU.

You can then pull your favorite models and start interacting with them.
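As a sketch of what that interaction can look like, the snippet below builds requests for Ollama's REST API: `POST /api/pull` to download a model and `POST /api/generate` to get a completion. The `BASE_URL` value is a placeholder and `llama3.2` is only an example model name; replace both with your Service's public URL and the model you want. The helper names are ours, not part of Ollama or Koyeb.

```python
import json
import urllib.request

# Placeholder: replace with the public URL of your own Koyeb Service.
BASE_URL = "https://ollama-example.koyeb.app"


def build_request(path, payload):
    """Build a JSON POST request for the Ollama API without sending it."""
    return urllib.request.Request(
        BASE_URL + path,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def pull_request(model):
    # "stream": False asks for a single JSON reply instead of progress chunks.
    return build_request("/api/pull", {"model": model, "stream": False})


def generate_request(model, prompt):
    return build_request(
        "/api/generate", {"model": model, "prompt": prompt, "stream": False}
    )


def send(req, timeout=600):
    """Send a request and decode the JSON body (pulling a model can take a while)."""
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return json.loads(resp.read())


def demo():
    """Pull an example model, then ask it a question."""
    send(pull_request("llama3.2"))
    answer = send(generate_request("llama3.2", "Why is the sky blue?"))
    print(answer["response"])
```

Calling `demo()` performs the network calls; the builder functions on their own only construct the requests, which makes them easy to inspect before you point them at a live Service.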

Seamless Experience for GPUs

Build, deploy, and scale your AI workloads with the best infrastructure on the market and a seamless serverless experience.

Koyeb GPUs come with the same serverless deployment experience you've come to expect on the platform: one-click deployment of Docker containers, built-in load balancing, seamless horizontal autoscaling, zero-downtime deployments, auto-healing, vector databases, observability, and real-time monitoring.

By the way, you can pause your GPU Instances when they're not in use. This is a great way to stretch your compute budget when you don't need to keep your GPU Instances running 24/7.

Serverless GPUs public preview is the second announcement in a 5-day event of amazing releases!

What's next?

Our goal is to let you easily build, run, and scale on the best accelerators using one unified platform. Our key focus for GPUs in the coming weeks is to enable scale-to-zero and improve autoscaling performance.

Are you looking for unique hardware configurations, specific GPUs, or specialized accelerators? We'd love to hear from you. We're currently adding more GPUs and accelerators to the platform and are working closely with early users to design our offering. Let's get in touch.

Best Serverless GPUs to Run Your AI Applications

To get started with Koyeb, you can sign up and start deploying your first Service today. If you want to dive deeper into our GPUs, have a look at the documentation.

Wishing you and your apps blazing-fast deployments! 🚀

Deploy your AI workloads on the best GPUs and accelerators for your needs. Benefit from built-in autoscaling, auto-healing, and more.

Keep up with all the latest updates by joining our vibrant and friendly serverless community or following us on X at @gokoyeb.